
3/21/2024 Metabase docs

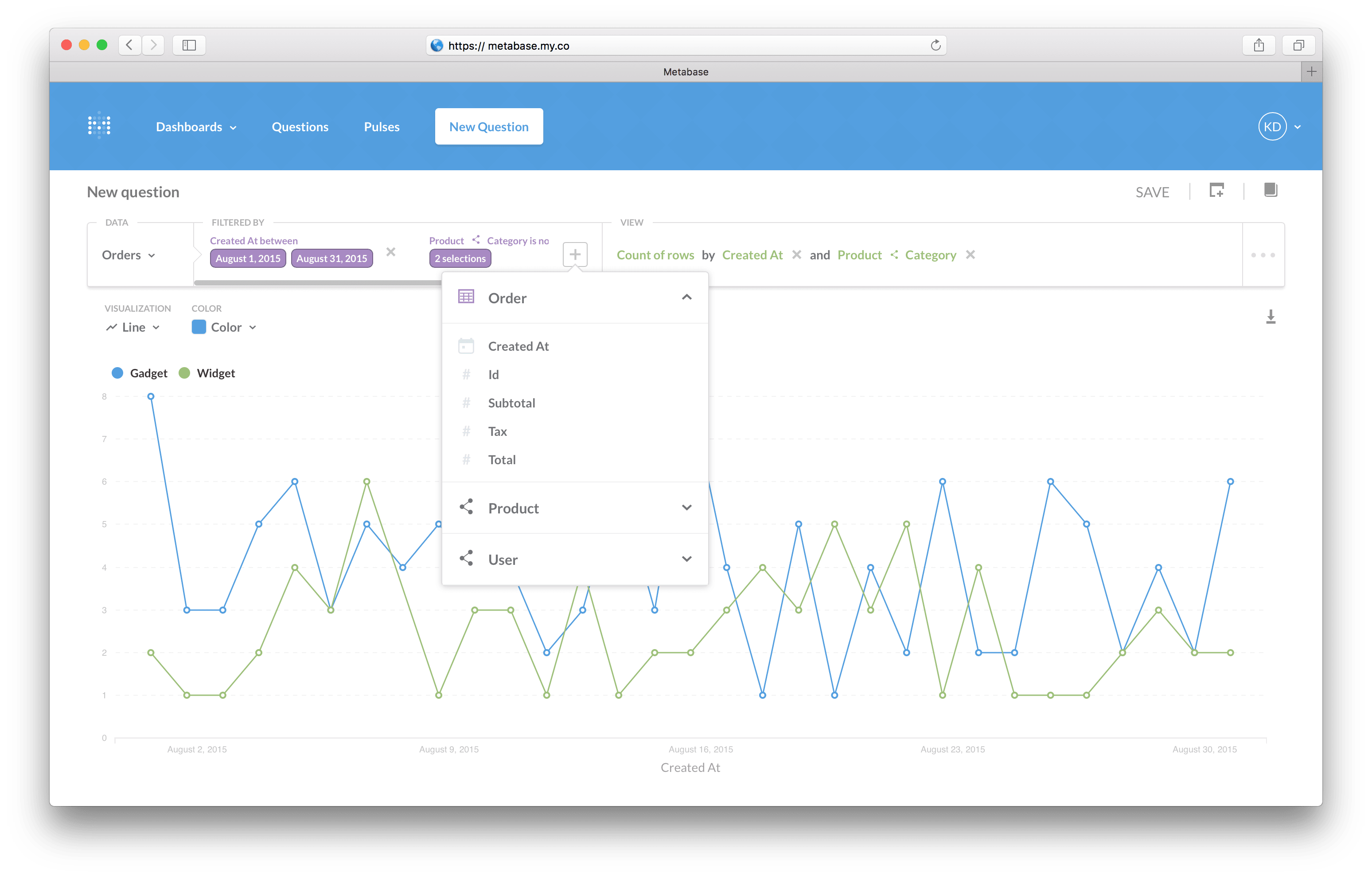

TL;DR: I combined dlt, dbt, DuckDB, MotherDuck, and Metabase to create a Modern Data Stack in a box that makes it very easy to create a data pipeline from scratch and then deploy it to production.

I started working at dltHub in March 2023, right around the time when we released DuckDB as a destination for dlt. As a Python user, being able to create a data pipeline, load the data on my laptop, and explore and query the data, all in Python, was awesome.

At the time I also came across a very cool article by Jacob Matson in which he talks about creating a Modern Data Stack (MDS) in a box with DuckDB. I was already fascinated with dlt and all the other new tools that I was discovering, so reading about this approach of combining different tools to execute an end-to-end proof of concept on your laptop was especially interesting.

Fast forward to a few weeks ago, when dlt released MotherDuck as a destination. The first thing that I thought of was an approach to MDS in a box where you develop locally with DuckDB and deploy in the cloud with MotherDuck.

In my example, I wanted to customize reports on top of Google Analytics 4 (GA4) and combine them with data from GitHub. This is usually challenging because, while exporting data from GA4 to BigQuery is simple, combining it with other sources and creating custom analytics on top of it can get pretty complicated.

By first pulling all the data from the different sources into DuckDB files on my laptop, I was able to do my development and customization locally.

And then, when I was ready to move to production, I was able to seamlessly switch from DuckDB to MotherDuck with almost no code rewriting!

Thus I got a super simple and highly customizable MDS in a box that is also close to a company production setting.

What does this MDS in a box version look like?

| Tool | Description |
| --- | --- |
| dlt | Ridiculously easy to write a customized pipeline in Python to load from any source |
| DuckDB | Free, fast OLAP database on your local laptop that you can explore using SQL or Python |
| MotherDuck | DuckDB, but in the cloud: a fast OLAP database that you can connect to your local DuckDB file and share with the team in company production settings |
| dbt | An amazing open source tool to package your data transformations; it also combines well with dlt, DuckDB, and MotherDuck |
| Metabase | Open source, has support for DuckDB, and looks prettier than my Python notebook |

The example that I chose was inspired by one of my existing workflows: understanding dlt-related metrics every month. Previously, I was using only Google BigQuery and Metabase to understand dlt's product usage, but now I decided to test what a migration to DuckDB and MotherDuck would look like.

The idea is to build a dashboard that tracks how people are using and engaging with dlt on different platforms, such as GitHub (contributions, activity, stars, etc.) and the dlt website and docs (number of website and docs visits, etc.). This is a perfect problem for testing my new super simple and highly customizable MDS in a box, because it involves combining data from different sources (GitHub API, Google Analytics 4) and tracking it in a live analytics dashboard.

The advantage of using dlt for data ingestion is that dlt makes it very easy to create and customize data pipelines using just Python. In this example, I created two data pipelines:

- BigQuery to DuckDB: Since all the Google Analytics 4 data is stored in BigQuery, I needed a pipeline that could load all events data from BigQuery into a local DuckDB instance. BigQuery does not exist as a verified source for dlt, which means that I had to write this pipeline from scratch.
- GitHub API to DuckDB: dlt has an existing GitHub source that loads data around reactions, PRs, comments, and issues. To also load data on stargazers, I had to modify the existing source.

Even though BigQuery does not exist as a dlt source, dlt makes it simple to write a pipeline that uses BigQuery as a source:

1. Create a new dlt project, which generates a folder with the project's directory structure.
2. Add BigQuery credentials inside `.dlt/secrets.toml`.
3. Add a Python function inside `bigquery.py` that requests the data.
4. Load the data by simply running `python bigquery.py`.

See the accompanying repo for a detailed step-by-step on how this was done.

The data in BigQuery is nested, and dlt automatically normalizes it on loading. BigQuery may store data in nested structures, which need to be flattened before being loaded into the target database.
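To make the idea of normalizing nested BigQuery data more concrete, here is a minimal pure-Python sketch of what flattening a nested record into relational tables can look like. It is an illustration only, not dlt's actual implementation: the `normalize` function, the `_id` and `_parent_id` columns, and the sample event are all hypothetical, and the double-underscore naming is only loosely modeled on the way dlt names flattened columns and child tables.

```python
from typing import Any, Dict, List, Optional

# Maps a table name to the list of flat rows collected for it.
Tables = Dict[str, List[Dict[str, Any]]]


def normalize(record: Dict[str, Any], table: str, tables: Tables,
              parent_id: Optional[str] = None) -> None:
    """Flatten one nested record into `tables` (hypothetical helper).

    Nested dicts become columns joined with "__"; lists become rows in a
    child table that point back to their parent row.
    """
    rows = tables.setdefault(table, [])
    # Illustrative row id: table name plus position in that table.
    row: Dict[str, Any] = {"_id": f"{table}:{len(rows)}"}
    if parent_id is not None:
        row["_parent_id"] = parent_id
    rows.append(row)

    def walk(prefix: str, value: Dict[str, Any]) -> None:
        for key, item in value.items():
            column = f"{prefix}__{key}" if prefix else key
            if isinstance(item, dict):
                # Nested struct: keep descending, extending the column name.
                walk(column, item)
            elif isinstance(item, list):
                # Repeated field: each element becomes a child-table row.
                for element in item:
                    child = element if isinstance(element, dict) else {"value": element}
                    normalize(child, f"{table}__{column}", tables, row["_id"])
            else:
                row[column] = item

    walk("", record)


# Made-up sample shaped like a GA4 event with a struct and a repeated field.
event = {
    "event_name": "page_view",
    "device": {"category": "desktop"},
    "event_params": [
        {"key": "page_title", "value": {"string_value": "Docs"}},
        {"key": "engagement_time_msec", "value": {"int_value": 1200}},
    ],
}

tables: Tables = {}
normalize(event, "events", tables)

for name, rows in tables.items():
    print(name, rows)
```

In this sketch the single nested event ends up as one row in `events` (with a `device__category` column) and two rows in `events__event_params`, each linked to its parent through `_parent_id`. That is the kind of flat layout a SQL database like DuckDB can query directly.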
